AI-based end credits detection automation to boost viewer engagement

Choose to skip the inessential

If you are anything like us, you live fast. To squeeze a couple of extra hours into a day, you have to find ways of doing everything quickly, even if that means constantly skipping parts of your favourite series such as the opening and closing sequences. Or the closing credits.

Services like Netflix, BBC iPlayer, and some others already offer to skip the final part of a movie (or, in the case of Netflix, leave you no choice but to skip it). But how accurately is it done? Does it really enhance your viewing experience?

We develop a full contextual understanding of video content by applying human-like machine learning (drawing on concepts such as brain-inspired computer vision, probabilistic AI, machine perception, and more). All of that serves to understand the content thoroughly: to keep what is essential and skip what can be replaced with another good movie.

Our philosophy is to decode visual content for a machine. More importantly, it is to filter that content so that it suits viewers and gives them options for how to engage with it. The EndCredits Detection Cloud AI, which we describe in this blog post, is part of a comprehensive system, a virtual ‘robot’ that we are building.

Giving credit to credits sequence

Sometimes the final credits are an inherent part of a film and give viewers some extra time in the universe they have just been part of. It is not enough to simply cut the end credits ten seconds after they appear on screen. It is very important to do it gently, so as not to ruin the viewer's experience by instantly shoving yet another movie under their nose.

To avoid this, we analyse the context of a scene thoroughly. Our system can tell when it is not advisable to switch to the next episode. The analysis algorithm we developed is able to tell when the intensity of the scene is still high (even if the names of the creators are already on screen) or when it will do little damage to skip.

That’s why we invented the two analysis modes that you can see below.

The ways of content analysis

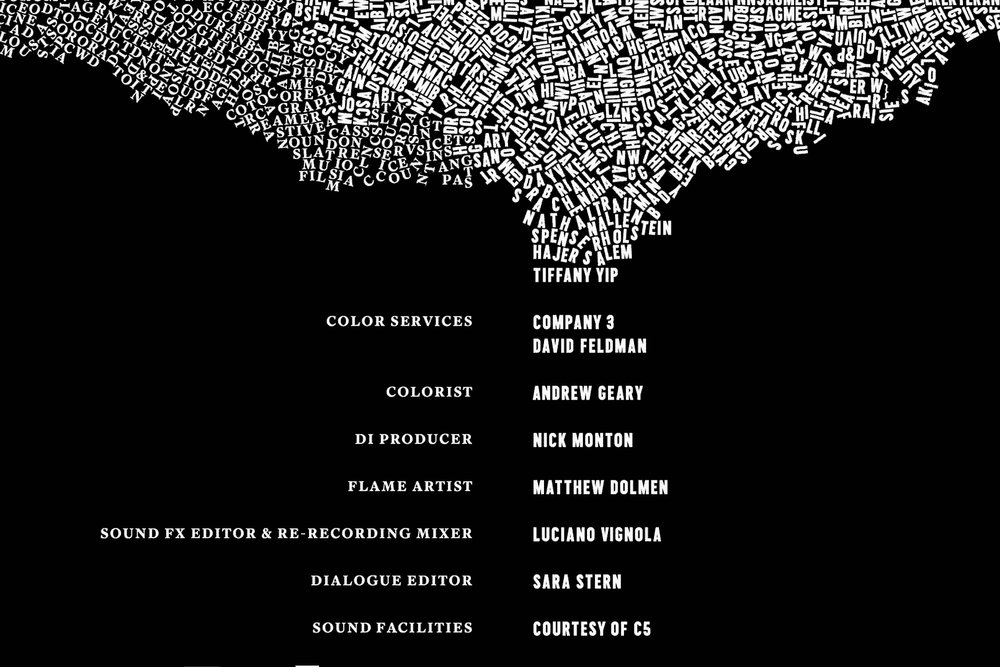

When you think of closing credits, the first thing that comes to mind is white-on-black rolling text. From the average viewer’s point of view, this is the least interesting and least amusing part of a movie. We introduce the Guaranteed mode for exactly this case.

Guaranteed mode

Guaranteed mode is our most precise way, with 99.99% accuracy, of detecting the parts of the closing credits that are the least interesting from the viewer’s point of view. These are typically traditional blocks of plain text rolling monotonously over a black background or over some other visuals. Our algorithms ensure that viewers won’t miss anything when this last part of the end credits is cut. It is the safest way of switching to another movie or episode without losing anything essential.

There is also a custom parameter that you can use. You can run the analysis to check whether there are dynamic visuals (not just a static picture) in the background of the least intensive part of the end credits. With this parameter, you can choose to keep the parts of the content where video is still playing in the background.

This last processing option is the safest way to protect video content.

But modern closing credits are so much more than that. It is quite common to see a burst of animated graphics, names embedded in the picture, or just the names of the leading actors appearing very slowly before the traditional block starts. For such a setup, we offer the Advanced mode.

Advanced mode

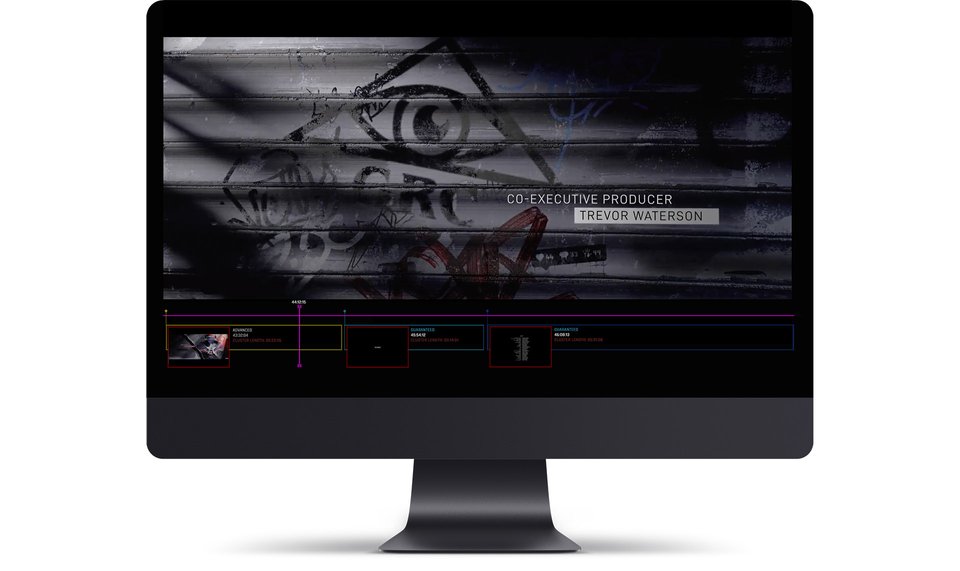

When you need to detect end credits that are combined with the last bits of a movie, you are welcome to use the Advanced mode of our EndCredits Detection AIAAS. Credits of this kind still seem to be part of the movie, yet the viewer is already about to leave without waiting for the least interesting bit (e.g. a small white-on-black cast list) to begin.

If you don’t want your viewers to lose this kind of content because it was recklessly cut off, you can prepare them for switching to another movie. In that case, you need to dig deeper and determine when a credits segment appears over visuals. Our system does that with our custom algorithms. This way, you let your viewers decide whether to leave for another episode before the least informative block starts or to keep watching.

In some cases, you might even want to cut this credits-over-visuals part, for example to save some extra time, knowing that you won’t leave anything essential behind. With the help of our Cloud AI, of course.

Here you can see the examples of the end credits detection in the Advanced mode and in the Guaranteed Mode:

Regardless of the EndCredits analysis mode you are using, you get just one timestamp as a result. Each mode delivers one number that you can use for system integration: the one that best matches your audience retention tactics. It is that easy.
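To illustrate how a player might consume that single timestamp, here is a minimal sketch. It is not the EndCredits API: the function name, the `credits_start_s` parameter, and the `grace_s` delay are hypothetical names chosen for this example, and the only thing taken from the text above is that each mode yields one timestamp.

```python
from dataclasses import dataclass


@dataclass
class SkipDecision:
    show_prompt: bool            # whether to display a "next episode" prompt
    seconds_into_credits: float  # how long the credits have been running


def decide_skip(position_s: float, credits_start_s: float, grace_s: float = 5.0) -> SkipDecision:
    """Decide whether to offer the next title at the current playback position.

    credits_start_s stands for the single timestamp returned by the analysis
    (hypothetical name). grace_s is an illustrative delay so the prompt does
    not appear the instant the credits begin.
    """
    elapsed = position_s - credits_start_s
    return SkipDecision(
        show_prompt=elapsed >= grace_s,
        seconds_into_credits=max(elapsed, 0.0),
    )
```

A player loop would call `decide_skip` with the current position on each tick and render the prompt once `show_prompt` turns true. The grace period is a design choice of this sketch, in the spirit of not switching to the next title abruptly.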

How to detect closing credits in movies

Movies contain many kinds of text (logos, in-frame text, ads, subtitles), so simply detecting letters in a frame is not enough. The logic we created helps us distinguish movie credits from other pieces of text and leave the latter out.

Our main approach combines concepts from brain-inspired computer vision, probabilistic AI, machine perception, and more to reach the highest possible degree of intelligence. We apply deep learning networks to create high-level representations of the visuals. With that, our system reliably distinguishes between static and dynamic visuals, recognizes logos, and filters out subtitles.

Unsupervised clustering is applied to segment the movie timeline. We also leverage it in our Automated Sports Highlights Generation AIAAS.

With these techniques, our tool works even in special cases such as credits interrupted by another scene or followed by a post-credits scene. Once our tool has detected the beginning of the closing credits, all that is left for an OTT platform is to decide whether to engage the user with another movie of the same kind or let them skip to the next one.
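The idea of segmenting a timeline with unsupervised clustering can be sketched in a few lines. This is not the algorithm used by the service, which is not described in this post: the example swaps in a plain two-cluster k-means over a hypothetical per-second "text coverage" score (assumed here to be a number between 0 and 1), and all names are illustrative.

```python
def two_means(values, iterations=20):
    """Split 1-D values into a low and a high cluster; return both centroids."""
    low, high = min(values), max(values)
    for _ in range(iterations):
        low_group = [v for v in values if abs(v - low) <= abs(v - high)]
        high_group = [v for v in values if abs(v - low) > abs(v - high)]
        if not low_group or not high_group:
            break  # all samples fell into one cluster, nothing to separate
        low = sum(low_group) / len(low_group)
        high = sum(high_group) / len(high_group)
    return low, high


def trailing_segment_start(scores):
    """Return the index where the final run of 'high' samples begins, or None."""
    low, high = two_means(scores)
    if low == high:
        return None  # flat signal: no distinct credits-like segment
    labels = [abs(v - high) < abs(v - low) for v in scores]
    if not labels[-1]:
        return None  # the timeline does not end in a 'high' segment
    start = len(labels) - 1
    while start > 0 and labels[start - 1]:
        start -= 1
    return start


# Hypothetical per-second scores: a short burst of in-frame text at index 2,
# then a sustained text-heavy run at the end of the timeline.
scores = [0.02, 0.05, 0.60, 0.03, 0.04, 0.70, 0.75, 0.80, 0.78]
print(trailing_segment_start(scores))  # prints 5
```

Only the run that reaches the end of the timeline is reported, so the isolated burst at index 2 is ignored. A real system would need far richer features than a single score to tell credits apart from logos, ads, and subtitles.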

Why do you need end credits detection

As already mentioned, our system understands video content on the scene level, just as a human would. Apart from that, it has some very tangible benefits that help ensure a seamless viewing experience for your subscribers.

For example, we can list the following gains:

- EndCredits Detection AIAAS supports any content type and any language. The system does not care whether it is processing a movie, a series, an animation, or even a TV show. It will still detect the final credits accurately. The AIAAS is also language-agnostic and can detect credits written in any script.

- It saves 8 hours out of every 100 hours of content watched. This is our calculation of how much time can be freed up for a viewer by integrating this feature into a content pipeline. It recovers up to 90% of the time that would otherwise be lost.

- The system ensures the detection of end credits even on top of the visuals. Yet it is intelligent enough to know what kind of credits visuals are important to keep and what could be skipped.

- The system is fully ready for integration. The API documentation (API Reference) can help you understand EndCredits from a technical point of view.

- Our AIAAS infrastructure scales to millions of movie frames daily. Thanks to batch processing, you won’t have to wait for movies to upload one by one.

- Our custom pipeline is adjustable to any industry need. You can choose the parameter configuration that fits your specific use case. This high variability does not interfere with the quality of end credits detection.

- The costs are fully transparent and depend on the volumes of input you want to process. Please contact us for more details.

Furthermore, if you already have a pipeline on your side that detects final credits, you can radically boost the accuracy and intelligence of that feature by using our AI.

The best way of leveraging EndCredits Detection AIAAS

If you are already convinced that you need to try our service, let us explain how to do it in the best way possible. If you are not, you can try the service for yourself right here.

For the optimal use of EndCredits Detection, please note that:

- The recommended video resolution is 720p. A lower resolution can affect detection accuracy: for example, credits may not be detected, or may be detected later than they start. A higher resolution, in turn, increases the processing time without improving the result.

- We recommend processing only the last part of a movie to reduce analysis time. For that, you will need to manipulate the playlist at the middleware level.

- You are welcome to contact us for more details on that.
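The recommendation to process only the last part of a movie implies some bookkeeping on the integration side, which could look like the sketch below. The helper names, the `tail_fraction` and `min_tail_s` values, and the assumption that a timestamp detected inside a clipped tail is relative to the start of that clip are all illustrative. How the service actually reports timestamps for partial input should be checked against the API documentation.

```python
def tail_window(duration_s: float, tail_fraction: float = 0.25, min_tail_s: float = 300.0):
    """Return (start, end) in seconds of the final portion to send for analysis.

    tail_fraction and min_tail_s are illustrative defaults, not recommendations
    from the service. The window never extends before the start of the movie.
    """
    tail_len = min(duration_s, max(duration_s * tail_fraction, min_tail_s))
    return duration_s - tail_len, duration_s


def to_absolute(tail_start_s: float, timestamp_in_tail_s: float) -> float:
    """Map a timestamp measured inside the tail clip back onto the full timeline."""
    return tail_start_s + timestamp_in_tail_s


# Example: a 100-minute movie, analysing the last quarter.
start, end = tail_window(6000.0, tail_fraction=0.25)
print(start, end)                   # prints 4500.0 6000.0
print(to_absolute(start, 1320.5))   # prints 5820.5
```

The middleware would select the playlist segments that cover `start` to `end`, submit only those, and convert the returned timestamp with `to_absolute` before handing it to the player.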

The human-like AI core (as a conclusion)

Typically, when companies pick a business case to solve with visual processing, they try to catch a very subjective event with the simplest possible processing, such as graphics detection, audio event detection, or optical character recognition. Then they commonly apply some straightforward rules (linear rules expressed as if-then-else statements). All of that limits the flexibility and extensibility of their solutions.

What we are building is not just a solution to the single use case of closing credits in isolation. We are creating a human-like video understanding system to slash hours of human work in the media industry. It operates like a virtual ‘robot’ that is intelligent enough to understand media patterns and then make decisions about video content on the scene level. It is extendable to any case in the industry. EndCredits Detection AIAAS is just one component of that complex system.

Anticipating tomorrow's demand, we apply brain-inspired computer vision to multi-dimensional visual data. In this way, we ensure a full contextual understanding of video content. The universal AI core can meet the industry's needs in terms of scalability and cognitive performance.

We are enhancing this universal 'robot' (AI core) and developing new use cases for it in the media and entertainment industry every single day. So far, these include automated sports highlight generation, media anomaly detection, plagiarism and piracy checks, ad detection and replacement, timeline/scene metadata generation, EPG correction, and more. Some of those cases are already being researched or developed.

All in all, we believe that our combination of the most innovative tech with deep knowledge of the bottlenecks you are facing can ensure a huge competitive advantage, but only for those brave enough to let human and machine collaboration raise the level of content understanding in the media and entertainment industry.